Hello,

I have trained and pruned a model in TF in order to deploy it to a Raspberry Pi Pico. I converted the model to TF Lite using the optimization `converter.optimizations = [tf.lite.Optimize.EXPERIMENTAL_SPARSITY]` (as shown in "Pruning for on-device inference w/ XNNPACK | TensorFlow Model Optimization"). The problem is that this optimization adds an operation called Densify to the converted model, which I believe is not implemented in TF Lite Micro. Note that pruned models are important for this kind of device, which has very limited resources.
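For reference, the conversion step looks roughly like the sketch below. This is only a minimal illustration, not my exact script: the function name `convert_with_sparsity` and the argument `pruned_model` are placeholders, and it assumes the Keras model has already had its pruning wrappers stripped (for example with `tfmot.sparsity.keras.strip_pruning`).

```python
def convert_with_sparsity(pruned_model):
    """Convert an already-pruned Keras model to a TF Lite flatbuffer.

    `pruned_model` is a placeholder for a tf.keras model whose pruning
    wrappers have been stripped. Returns the serialized model as bytes.
    """
    # Imported here so the function can be defined without TensorFlow loaded.
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_keras_model(pruned_model)
    # Ask the converter to store pruned weights in a sparse format.
    # This is the setting after which the Densify op shows up in my model.
    converter.optimizations = [tf.lite.Optimize.EXPERIMENTAL_SPARSITY]
    return converter.convert()
```

The returned bytes are what I write to a `.tflite` file and then try to run with TF Lite Micro on the Pico.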

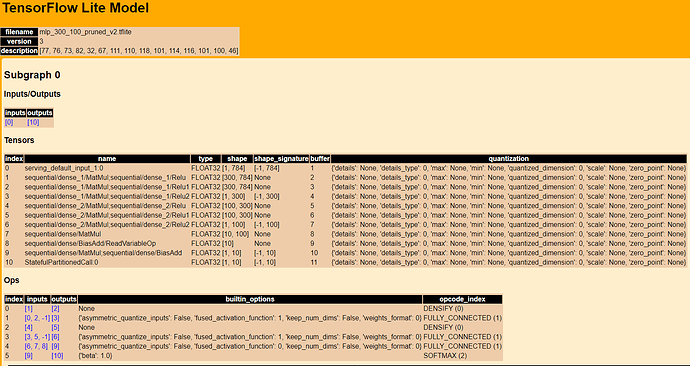

This is the model: (300,100,10)

Has anybody experienced this issue? Is there any solution?