Hello,

I’m deploying a TensorFlow Lite model on a UAV board called the VOXL 2. The manufacturer provides a custom package, “voxl-tflite-server”, which adds TensorFlow Lite support through the C++ API. More info can be found here. Several TFLite models come prepackaged with their software and run well on both the CPU and GPU. I need to retrain a keypoint detector on a new object and deploy it on this board.

I downloaded the “CenterNet MobileNetV2 FPN Keypoints 512x512” model from the TF2 Object Detection Zoo and customized voxl-tflite-server to load this model and run inference on a set of images. A few operations in this model are not supported by the GPU delegate; however, 120 operations are delegated to the GPU and the rest fall back to the CPU. The model runs inference on the input images with no issues and low latency.

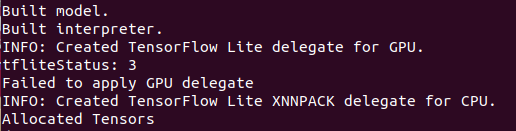

My problem: using the Object Detection API, I retrained the “CenterNet MobileNetV2 FPN Keypoints 512x512” model to detect a new class’s bounding box and keypoints, but the GPU delegate fails to be applied, as shown below.

On the CPU alone, the retrained model detects keypoints well, but we want faster inference, hence the need for GPU acceleration.

I looked into the meaning of TfLiteStatus = 3, which is kTfLiteApplicationError: “Delegation failed to be applied due to the incompatibility with the TfLite runtime, e.g., the model graph is already immutable when applying the delegate. However, the interpreter could still be invoked.”

The only thing I could gather from this error message is that the model graph is immutable but the interpreter can still be invoked, and that for some reason this prevents the GPU delegate from being applied. Can anyone explain this error in more detail and how to avoid it?
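One possible cause worth ruling out, beyond the "immutable graph" example in the status description, is that the retrained model contains dynamic-sized tensors while the delegate only accepts static shapes. The sketch below is a rough way to check for this from Python before deploying. It assumes TensorFlow is installed on the development machine; the function names `find_dynamic_tensors` and `report_dynamic_tensors` are made up for illustration, and a tensor whose size is only resolved at run time may not show up this way.

```python
def find_dynamic_tensors(tensor_details):
    """Return (name, shape_signature) for tensors with an unknown (-1) dimension.

    `tensor_details` is a list of dicts shaped like the output of
    tf.lite.Interpreter.get_tensor_details().
    """
    dynamic = []
    for detail in tensor_details:
        signature = detail.get("shape_signature")
        if signature is None:
            continue  # no signature recorded for this tensor
        dims = [int(d) for d in signature]
        if any(d < 0 for d in dims):
            dynamic.append((detail["name"], dims))
    return dynamic


def report_dynamic_tensors(model_path):
    """Load a .tflite file and list tensors whose signature has a -1 dimension."""
    import tensorflow as tf  # imported lazily so the helper above has no dependencies

    interpreter = tf.lite.Interpreter(model_path=model_path)
    return find_dynamic_tensors(interpreter.get_tensor_details())
```

Running `report_dynamic_tensors` on both the zoo model and the retrained model, and comparing the two lists, may show whether the retrained export introduced unknown dimensions that the original did not have.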

Other things I’ve tried:

- Post-training quantization (float16),

- Other models (CenterNet HourGlass104 Keypoints 512x512 from TF2 Detection Zoo),

- Fixing the input size before converting from TensorFlow to TFLite (the input can be [1,?,?,3]; I tried specifying [1,256,256,3] and [1,512,512,3]),

- A representative dataset during conversion (each image is a [1,height,width,3] uint8 array),

- Other versions of TensorFlow; I tried 2.13, 2.10, and 2.8 for both training and conversion.
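For reference, the fixed-input-size and float16 attempts above can be sketched roughly as follows. This is a minimal sketch and not the exact script used: it assumes the exported SavedModel has a `serving_default` signature whose first input is the image tensor, and the helper names `fixed_input_shape` and `convert_with_fixed_shape` are hypothetical.

```python
def fixed_input_shape(height, width, channels=3):
    """Build a fully static NHWC shape with batch size 1."""
    if min(height, width, channels) <= 0:
        raise ValueError("height, width and channels must be positive")
    return [1, height, width, channels]


def convert_with_fixed_shape(saved_model_dir, output_path,
                             height=512, width=512, use_float16=False):
    """Convert a SavedModel to TFLite after pinning the input shape."""
    import tensorflow as tf  # imported lazily; only needed for the conversion

    model = tf.saved_model.load(saved_model_dir)
    # Assumption: the export exposes a "serving_default" signature and its
    # first input is the image tensor.
    concrete_func = model.signatures["serving_default"]
    concrete_func.inputs[0].set_shape(fixed_input_shape(height, width))

    # Passing the loaded model as the trackable object keeps its variables alive.
    converter = tf.lite.TFLiteConverter.from_concrete_functions(
        [concrete_func], model)
    if use_float16:
        # Post-training float16 quantization.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
        converter.target_spec.supported_types = [tf.float16]

    tflite_model = converter.convert()
    with open(output_path, "wb") as f:
        f.write(tflite_model)
    return output_path
```

Pinning the input only removes the unknown height and width at the graph boundary; tensors further inside the graph can still have sizes that are not known until run time.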

Thanks!