Hello everyone, I’m trying to adapt Nicholas Renotte’s “Build a Deep CNN Image Classifier with ANY Images” tutorial

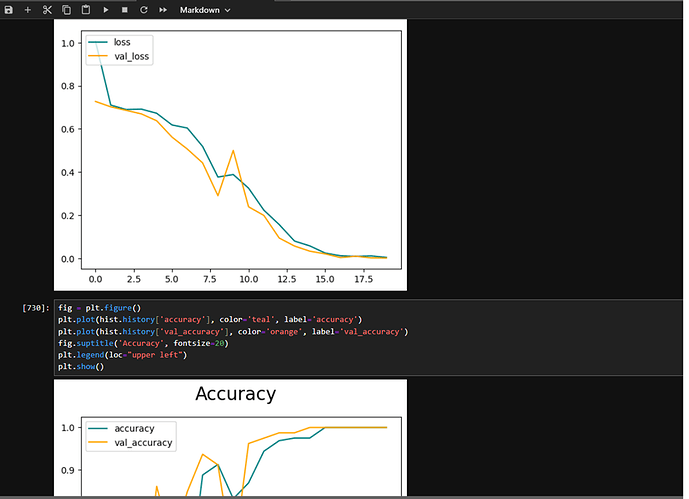

to a simple model. The model trains well and the accuracy reaches 1.0.

However, when I try to evaluate it, I’m getting zero values.

The evaluation batch size is 1.

I’m doing this:

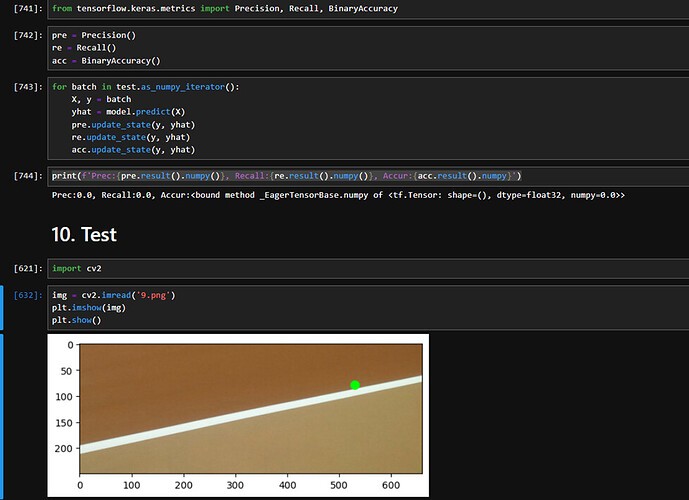

    from tensorflow.keras.metrics import Precision, Recall, BinaryAccuracy

    pre = Precision()
    re = Recall()
    acc = BinaryAccuracy()

    for batch in test.as_numpy_iterator():
        X, y = batch
        yhat = model.predict(X)
        pre.update_state(y, yhat)
        re.update_state(y, yhat)
        acc.update_state(y, yhat)

    print(f'Prec:{pre.result().numpy()}, Recall:{re.result().numpy()}, Accur:{acc.result().numpy}')

And getting this:

Prec:0.0, Recall:0.0, Accur:<bound method _EagerTensorBase.numpy of <tf.Tensor: shape=(), dtype=float32, numpy=0.0>>

while my accuracy during training looks okay.

Any ideas?

Thank you in advance.

If Precision, Recall, and Accuracy all return zero during evaluation even though the model trains well and reaches high accuracy, there are several common areas to investigate:

1. Thresholding of Predictions: Precision, Recall, and BinaryAccuracy apply a default threshold of 0.5 to the predictions (yhat), so you do not need to threshold them yourself, but do check the raw values. If your model’s outputs stay at or below 0.5, no sample is counted as positive, and Precision and Recall both come out as zero.

2. Data Imbalance: If your dataset is highly imbalanced, with a significant difference between the number of examples in each class, the model might learn to predict the majority class for all inputs, leading to a high accuracy but low Precision and Recall for the minority class.

3. Model Predictions: Verify that your model is making varied predictions and not outputting the same value (e.g., all zeros or ones) for every input. Constant predictions can lead to zero Precision and Recall if the constant prediction does not match the true labels.

4. Label Encoding: Ensure that your labels (y) are correctly encoded. For binary classification, labels should typically be 0 or 1. Incorrect label encoding could lead to mismatches between predictions and true labels.

5. Metric Calculation: In your code snippet, there is a typo in the print statement for accuracy. Instead of acc.result().numpy, it should be acc.result().numpy(). Without the parentheses, the code prints the bound method rather than its result.

6. Evaluation Batch Size: These metrics accumulate their counts across update_state() calls, so a batch size of 1 should give the same totals as a larger one. Still, try evaluating with a larger batch size to see if there’s a difference, since a change would point to a problem in how the data is batched or split.

7. Model Output Layer: Confirm that the output layer of your model is appropriate for a binary classification task. Typically, this would be a single neuron with a sigmoid activation function. If your model’s output layer does not match this structure, it might not be outputting probabilities suitable for binary classification metrics.

8. Metric Update State: Ensure that pre.update_state(), re.update_state(), and acc.update_state() are called correctly within the loop. It seems correct in your snippet, but double-check for any possible issues in how the loop is structured or how the data is fed.

If after checking these areas the issue still persists, consider creating a minimal reproducible example and seeking further assistance, possibly by sharing more code or details about your model architecture and dataset.
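Several of the checks above (label values, the range of predictions, how many predictions clear the threshold, and whether the loop runs at all) can be gathered in one pass. The following is a minimal sketch in plain Python, not part of the original notebook: `summarize_eval` and `_flatten` are hypothetical helper names, `batches` stands in for whatever yields `(X, y)` pairs, and `predict` stands in for the model's prediction function.

```python
def _flatten(values):
    """Yield scalars from arbitrarily nested sequences of numbers."""
    try:
        iterator = iter(values)
    except TypeError:
        # Not iterable, so treat it as a single number.
        yield float(values)
        return
    for item in iterator:
        yield from _flatten(item)


def summarize_eval(batches, predict, threshold=0.5):
    """Collect basic facts about an evaluation run (hypothetical helper)."""
    n_batches = 0
    labels, preds = [], []
    for X, y in batches:
        n_batches += 1
        labels.extend(_flatten(y))
        preds.extend(_flatten(predict(X)))
    return {
        "n_batches": n_batches,               # 0 means the loop body never ran
        "label_values": sorted(set(labels)),  # binary labels should be 0.0 and 1.0
        "pred_min": min(preds) if preds else None,
        "pred_max": max(preds) if preds else None,
        # How many predictions would count as positive at this threshold.
        "n_pred_positive": sum(p > threshold for p in preds),
    }
```

If `n_batches` comes back as 0, or `n_pred_positive` is 0 while the labels contain positives, that narrows down which of the points above to look at first.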

Thank you @Tim_Wolfe

I checked the points above and fixed the acc.result() print issue. I’m still getting zero.

The issue is reproducible. I uploaded it here: tennis.rar - Google Drive

It contains one notebook, Tennis.ipynb, and the data to classify is in the ‘tennis’ folder, which has 2 labels: in_small and out_small.

I hope you can assist me further.

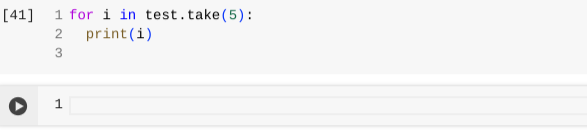

Hi @Rebecca_Armitage, I have gone through your code and I have tried to see the data in the test dataset using

and found there is no data in the test dataset. That is why you are getting 0 when calculating precision and recall. Could you please try splitting the dataset properly? Thank you.
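To illustrate why an empty test set gives zeros rather than an error: the loop body never runs, so no counts accumulate, and a 0/0 ratio is reported as 0, which matches the 0.0 values in the output earlier in the thread. The sketch below is a plain-Python stand-in for that behavior, not the Keras implementation; `StreamingPrecisionRecall` and `evaluate` are hypothetical names.

```python
class StreamingPrecisionRecall:
    """Plain-Python stand-in for streaming precision/recall (hypothetical)."""

    def __init__(self, threshold=0.5):
        self.threshold = threshold
        self.tp = 0  # true positives
        self.fp = 0  # false positives
        self.fn = 0  # false negatives

    def update_state(self, y_true, y_pred):
        for truth, score in zip(y_true, y_pred):
            predicted_positive = score > self.threshold
            if predicted_positive and truth == 1:
                self.tp += 1
            elif predicted_positive:
                self.fp += 1
            elif truth == 1:
                self.fn += 1

    @staticmethod
    def _safe_ratio(numerator, denominator):
        # Report 0.0 instead of failing when nothing has been counted.
        return numerator / denominator if denominator else 0.0

    def precision(self):
        return self._safe_ratio(self.tp, self.tp + self.fp)

    def recall(self):
        return self._safe_ratio(self.tp, self.tp + self.fn)


def evaluate(batches):
    """Run the metric over (y_true, y_pred) batches and count the batches."""
    metric = StreamingPrecisionRecall()
    n_batches = 0
    for y_true, y_pred in batches:
        metric.update_state(y_true, y_pred)
        n_batches += 1
    return n_batches, metric.precision(), metric.recall()
```

Calling `evaluate([])` returns a batch count of 0 with both metrics at 0.0, so printing the batch count next to the metrics makes this case easy to spot.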

Hey @Kiran_Sai_Ramineni, and thank you.

I increased the number of test data batches by 1 and am now getting the desired results:

test_size = int(len(data)*.1)+2

Prec:1.0, Recall:1.0, Accur:1.0
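For anyone hitting the same thing, a quick way to catch an empty split before evaluating is to work out how many batches each split really receives. This is a minimal sketch that assumes the splits are taken one after another with take/skip and that the sizes are counted in batches. The notebook's actual split code is not shown in this thread, so `split_counts` and the example sizes are hypothetical.

```python
def split_counts(n_batches, train_size, val_size, test_size):
    """Batches each split really gets under sequential take/skip (assumed pattern)."""
    remaining = n_batches
    counts = {}
    for name, size in (("train", train_size), ("val", val_size), ("test", test_size)):
        # A split can never receive more batches than are left over.
        got = max(0, min(size, remaining))
        counts[name] = got
        remaining -= got
    return counts


# Hypothetical example: 3 batches in total, requested sizes 2 / 1 / 1.
counts = split_counts(n_batches=3, train_size=2, val_size=1, test_size=1)
empty = [name for name, got in counts.items() if got == 0]
print(counts, "empty splits:", empty)
```

Under that assumption, whether one extra test batch is enough depends on how many batches the earlier splits have already consumed, so it is worth checking the counts whenever the dataset size changes.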