In April 2023, I tried customizing an activation function. One problem I ran into was that, although it worked during training, every time I loaded the h5 file I got a deserialization error caused by the custom activation function. That was resolved using the code from my previous inquiry; the underlying issue seemed to be outdated TensorFlow documentation on object customization. Here is the link: Customizing activation function and loading saved H5 file issue - General Discussion - TensorFlow Forum

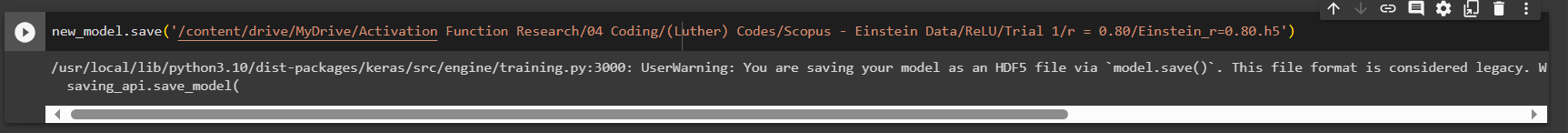

This time I encountered the same problem, but I also noticed an unusual message when I save my model to an h5 file using “model.save()”, which says “/usr/local/lib/python3.10/dist-packages/keras/src/engine/training.py:3000: UserWarning: You are saving your model as an HDF5 file via model.save(). This file format is considered legacy. We recommend using instead the native Keras format, e.g. model.save('my_model.keras').

saving_api.save_model(”

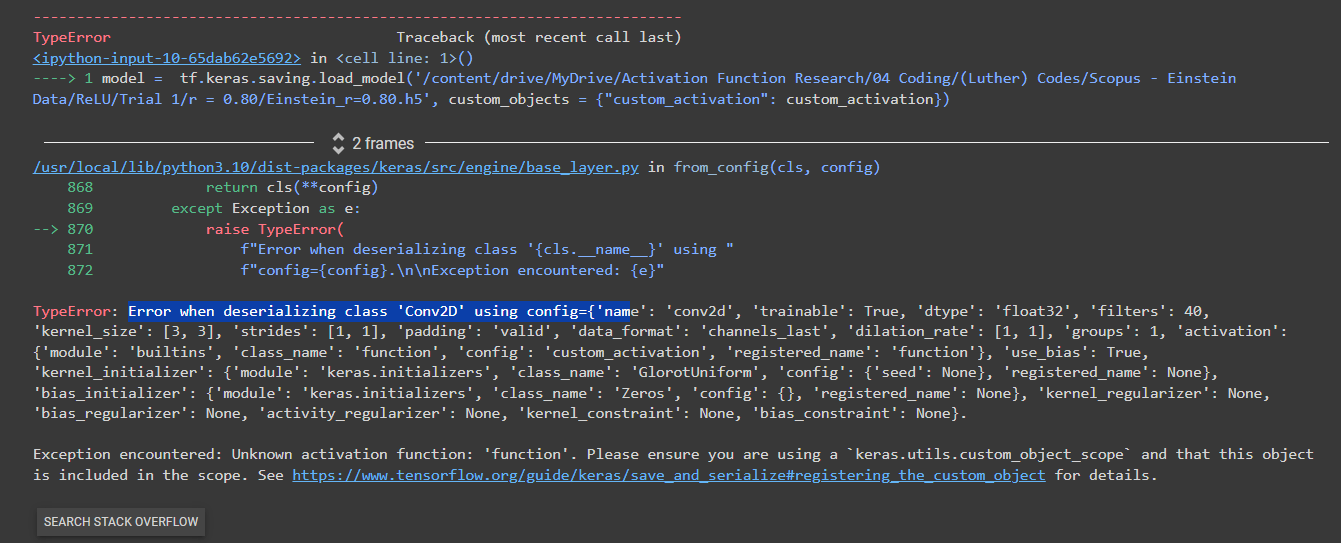

When I then try to load the saved h5 file containing my custom activation function using “tf.keras.models.load_model”, I get the same TypeError again, raised while deserializing a class in my h5 file.
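For context, here is a minimal sketch of the save/load pattern I am describing. It is simplified and not my exact code: the activation `scaled_swish`, the tiny model, and the file name `my_model.h5` are all hypothetical stand-ins, and the activation here is a plain function rather than a class. As far as I understand, passing the function through `custom_objects` is the expected way to let `load_model` find it.

```python
import os
import tempfile

import numpy as np
import tensorflow as tf


def scaled_swish(x):
    """Hypothetical custom activation (illustration only): a scaled swish."""
    return 1.5 * x * tf.sigmoid(x)


def build_model():
    # Tiny stand-in model; the real one would be larger.
    return tf.keras.Sequential([
        tf.keras.Input(shape=(4,)),
        tf.keras.layers.Dense(8, activation=scaled_swish),
        tf.keras.layers.Dense(1),
    ])


def save_and_reload(model, path):
    # Saving with an .h5 suffix uses the legacy HDF5 format
    # (this is the call that prints the UserWarning).
    model.save(path)
    # The key in custom_objects must match the function's name as stored in the file.
    return tf.keras.models.load_model(
        path,
        custom_objects={"scaled_swish": scaled_swish},
        compile=False,
    )


model = build_model()
path = os.path.join(tempfile.mkdtemp(), "my_model.h5")  # hypothetical file name
reloaded = save_and_reload(model, path)

# Compare the original and reloaded models on the same random input.
x = np.random.rand(2, 4).astype("float32")
print(np.allclose(model(x).numpy(), reloaded(x).numpy(), atol=1e-6))
```

The same `custom_objects` argument should also apply if the file is saved in the native format with a `.keras` suffix, as the warning recommends, though I have not confirmed whether that changes the error I am seeing.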

Can anyone give me sample code showing how to customize an activation function properly, and how I can solve my current issue of opening the h5 file with the customized activation function?

I would appreciate any comments, suggestions, etc… Thank you!