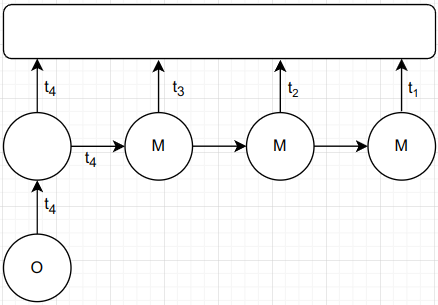

I am trying to implement simple TDNN-like functionality.

The idea is to collect the output of a pre-trained model and unroll it over N steps.

It acts like a cache or memory (hence the “M” in the figure), but it is not recurrent: the output is not fed back to the input. It is something like keras.utils.timeseries_dataset_from_array, except that the windows are built from consecutive outputs of a layer/net rather than from a fixed input array.
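To make the intent concrete, here is a minimal framework-agnostic sketch of the "M" block as I picture it. The names `OutputWindow`, `push`, and `pretrained_model` are hypothetical and only for illustration; the stand-in model just returns a small list, and a real version would hold tensors instead.

```python
from collections import deque


class OutputWindow:
    """Keeps the last n_steps outputs of an upstream model (hypothetical helper)."""

    def __init__(self, n_steps):
        self.n_steps = n_steps
        # A bounded deque drops the oldest entry automatically when full.
        self.buffer = deque(maxlen=n_steps)

    def push(self, output):
        """Store one output; return the unrolled window once n_steps are available."""
        self.buffer.append(output)
        if len(self.buffer) < self.n_steps:
            return None  # still warming up, not enough history yet
        return list(self.buffer)  # copy, ordered oldest first, newest last


def pretrained_model(x):
    # Stand-in for the real pre-trained model: any callable mapping input to output.
    return [2 * x, 2 * x + 1]


window = OutputWindow(n_steps=3)
windows = []
for t in range(5):
    unrolled = window.push(pretrained_model(t))
    if unrolled is not None:
        windows.append(unrolled)  # each entry holds N consecutive model outputs
```

Nothing is fed back into `pretrained_model`, so the buffer is pure memory and not recurrence. Each returned window could presumably be stacked along a new time axis and passed to whatever layer consumes the N steps.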