Blog post: Google AI Blog: Model Ensembles Are Faster Than You Think (10 November, 2021)

Conclusion

As we have seen, ensemble/cascade-based models obtain superior efficiency and accuracy over state-of-the-art models from several standard architecture families. In our paper we show more results for other models and tasks. For practitioners, this outlines a simple procedure to boost accuracy while retaining efficiency using off-the-shelf models. We encourage you to try it out!

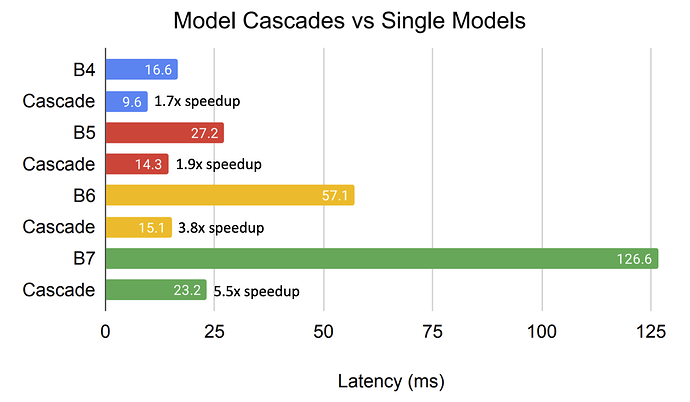

(Ensembles and cascades - 2-model combinations for both ensembles and cascades - from the blog post)

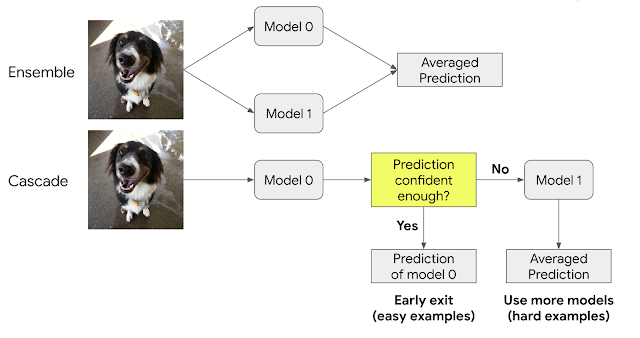

(Inference latency - Model cascades vs single models - Average latency of cascades on TPUv3 for online processing - from the blog post)

Paper (arXiv): Wisdom of Committees: An Overlooked Approach To Faster and More Accurate Models

We show that even this method already outperforms state-of-the-art architectures found by costly neural architecture search (NAS) methods. Note that this method works with off-the-shelf models and does not use specialized techniques … Our analysis shows that committee-based models provide a simple complementary paradigm to achieve superior efficiency without tuning the architecture. One can often improve accuracy while reducing inference and training cost by building committees out of existing networks.

… We show that committee-based models, i.e., model ensembles or cascades, provide a simple complementary paradigm to obtain efficient models without tuning the architecture. Notably, cascades can match or exceed the accuracy of state-of-the-art models on a variety of tasks while being drastically more efficient. Moreover, the speedup of model cascades is evident in both FLOPs and on-device latency and throughput. The fact that these simple committee-based models outperform sophisticated NAS methods, as well as manually designed architectures, should motivate future research to include them as strong baselines whenever presenting a new architecture. For practitioners, committee-based models outline a simple procedure to improve accuracy while maintaining efficiency that only needs off-the-shelf models.
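
To make the two kinds of committee concrete, here is a minimal Python sketch. It rests on several assumptions that are not in the excerpts above: a "model" is any callable returning a list of class probabilities, an ensemble averages those probabilities, and a cascade stops as soon as the highest averaged probability reaches a single threshold. The names `ensemble_predict`, `cascade_predict`, `small_model`, and `large_model` are hypothetical, and the toy models return hard-coded values purely for illustration.

```python
from typing import Callable, List, Sequence, Tuple

# Assumption: a "model" is any callable mapping an input to class probabilities.
Model = Callable[[object], List[float]]


def ensemble_predict(models: Sequence[Model], x: object) -> List[float]:
    """Average the class probabilities of all models (every model always runs)."""
    if not models:
        raise ValueError("need at least one model")
    outputs = [model(x) for model in models]
    return [sum(column) / len(outputs) for column in zip(*outputs)]


def cascade_predict(
    models: Sequence[Model], x: object, threshold: float
) -> Tuple[List[float], int]:
    """Run models in order and exit early once the running average is confident.

    Confidence is taken to be the maximum averaged class probability
    (an assumption of this sketch). Returns the averaged probabilities
    and the number of models that were actually evaluated.
    """
    if not models:
        raise ValueError("need at least one model")
    totals: List[float] = []
    average: List[float] = []
    used = 0
    for model in models:
        probs = model(x)
        used += 1
        # Accumulate per-class sums so the average covers all models run so far.
        totals = list(probs) if not totals else [t + p for t, p in zip(totals, probs)]
        average = [t / used for t in totals]
        if max(average) >= threshold:
            break  # confident enough: skip the remaining (costlier) models
    return average, used


# Hypothetical toy models: a cheap one that is unsure on "hard" inputs,
# and an expensive one that is more decisive.
def small_model(x: object) -> List[float]:
    return [0.875, 0.125] if x == "easy" else [0.5, 0.5]


def large_model(x: object) -> List[float]:
    return [1.0, 0.0] if x == "easy" else [0.25, 0.75]


if __name__ == "__main__":
    committee = [small_model, large_model]
    print(cascade_predict(committee, "easy", threshold=0.75))  # stops after 1 model
    print(cascade_predict(committee, "hard", threshold=0.75))  # falls through to 2 models
    print(ensemble_predict(committee, "hard"))
```

In this sketch the cascade's saving comes from ordering the models from cheapest to most expensive, so that inputs the small model already handles confidently never reach the large one. The threshold controls the trade-off: raising it sends more inputs to the later models.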